Introduction

Anthropic has just released Claude Haiku 4.5.

The Claude family consists of three models with different parameter sizes: Claude Opus (large), Sonnet (medium), and Haiku (small). The major highlight of this update is that the small Claude Haiku 4.5 maintains high performance while being faster and cheaper.

Five months ago, Claude Sonnet 4 was one of the most advanced models. Now, the newly released Haiku 4.5 nearly matches its coding performance at one-third of the cost and more than twice the speed.

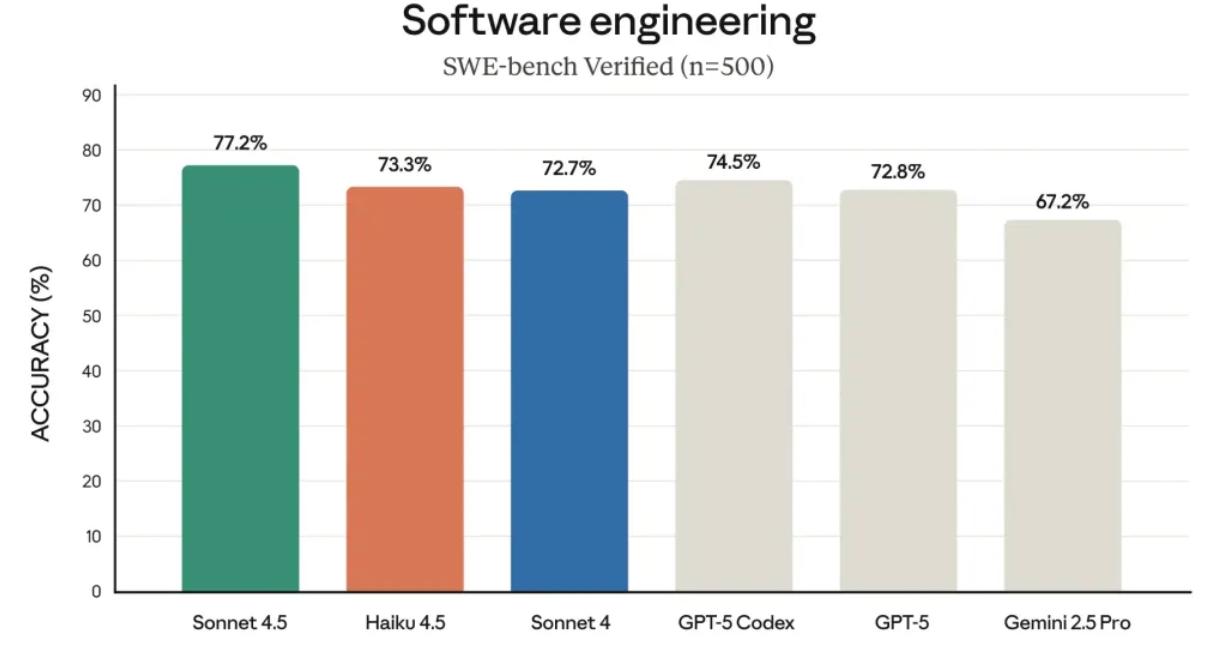

Specifically, on the SWE-bench Verified test set, which measures AI coding abilities, Haiku 4.5 achieved a score of 73%. What does this mean? It stands on par with Claude Sonnet 4 and OpenAI’s latest GPT-5. In certain tasks, such as controlling a computer, Haiku 4.5 even outperformed its older sibling, Sonnet 4.

For scenarios requiring AI to handle real-time, low-latency tasks—such as chat assistants, customer service agents, or pair programming assistants—Haiku 4.5 combines high intelligence with excellent speed, providing a better experience.

Developers using Claude Code will find that Haiku 4.5 makes the entire programming process—from multi-agent collaboration to rapid prototyping—much more responsive and efficient.

Of course, Sonnet 4.5, released two weeks ago, remains Anthropic’s flagship model and ranks among the world’s top coding models. Haiku 4.5 offers another option: performance close to the top model at a much more affordable price.

The two models can also work together: Sonnet 4.5 can break a complex problem into N smaller tasks and coordinate multiple Haiku 4.5 instances that work on them in parallel.
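The planner-and-workers pattern described above can be sketched as follows. This is a minimal illustration, not Anthropic's implementation: `plan_subtasks` and `run_worker` are hypothetical stand-ins for calls to a planner model and a worker model, and they only manipulate strings so the example runs without any API access.

```python
from concurrent.futures import ThreadPoolExecutor


def plan_subtasks(problem: str, n: int) -> list[str]:
    """Hypothetical stand-in for the planner (the role the article gives Sonnet 4.5)."""
    return [f"{problem} [part {i + 1} of {n}]" for i in range(n)]


def run_worker(subtask: str) -> str:
    """Hypothetical stand-in for one worker (the role the article gives Haiku 4.5)."""
    return f"done: {subtask}"


def orchestrate(problem: str, n: int = 3) -> list[str]:
    """Split a problem into n subtasks and run the workers in parallel."""
    subtasks = plan_subtasks(problem, n)
    with ThreadPoolExecutor(max_workers=n) as pool:
        # Executor.map returns results in the same order as the inputs.
        return list(pool.map(run_worker, subtasks))


if __name__ == "__main__":
    for result in orchestrate("migrate the billing module"):
        print(result)
```

In a real system the two stand-ins would be replaced by model calls, and the planner would usually merge the workers' results in a final step.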

Anthropic has conducted thorough safety and alignment testing on Haiku 4.5. The results show a lower rate of undesirable behavior than its predecessor, Haiku 3.5, and significantly improved alignment. In automated alignment assessments, Haiku 4.5 also showed a lower overall rate of misaligned behavior than Sonnet 4.5 and Opus 4.1.

By this measure, it is currently Anthropic’s safest model.

Pricing for Haiku 4.5 is set at $1 per million input tokens and $5 per million output tokens. In comparison, GPT-5 mini costs about $0.25 per million input tokens and $2.5 per million output tokens, and Google’s Gemini 2.5 Flash is similarly priced. At these figures, Haiku 4.5 costs about four times as much as GPT-5 mini or Flash for input and about twice as much for output.
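The ratios can be checked directly from the prices quoted above. The sketch below uses only those figures; the workload of 2 million input tokens and 500,000 output tokens is a made-up example chosen to show how the blended ratio depends on the input/output mix.

```python
# Prices in USD per million tokens, as quoted in this article.
PRICES = {
    "Haiku 4.5": {"input": 1.00, "output": 5.00},
    "GPT-5 mini": {"input": 0.25, "output": 2.50},
}


def price_ratio(model: str, baseline: str, kind: str) -> float:
    """How many times more `model` costs than `baseline` for one token kind."""
    return PRICES[model][kind] / PRICES[baseline][kind]


def job_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Total cost in USD for a job with the given token counts."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


if __name__ == "__main__":
    print(price_ratio("Haiku 4.5", "GPT-5 mini", "input"))   # input-price ratio
    print(price_ratio("Haiku 4.5", "GPT-5 mini", "output"))  # output-price ratio
    # Hypothetical workload: 2M input tokens, 0.5M output tokens.
    for name in PRICES:
        print(name, job_cost(name, 2_000_000, 500_000))
```

Because the input and output ratios differ, the effective price gap for a given application falls somewhere between the two, depending on how output-heavy the workload is.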

However, it costs about one-third as much as Sonnet 4.5 with almost no difference in performance, making it a cost-effective option for developers.

That said, math is not its strong suit.

Notable blogger Dan Shipper found that Haiku can be a bit… confused with arithmetic. For example, in a test involving an Uber bill, Haiku perfectly identified all relevant emails but failed to calculate the total amount correctly. More embarrassingly, after acknowledging the mistake, it repeated the same error.

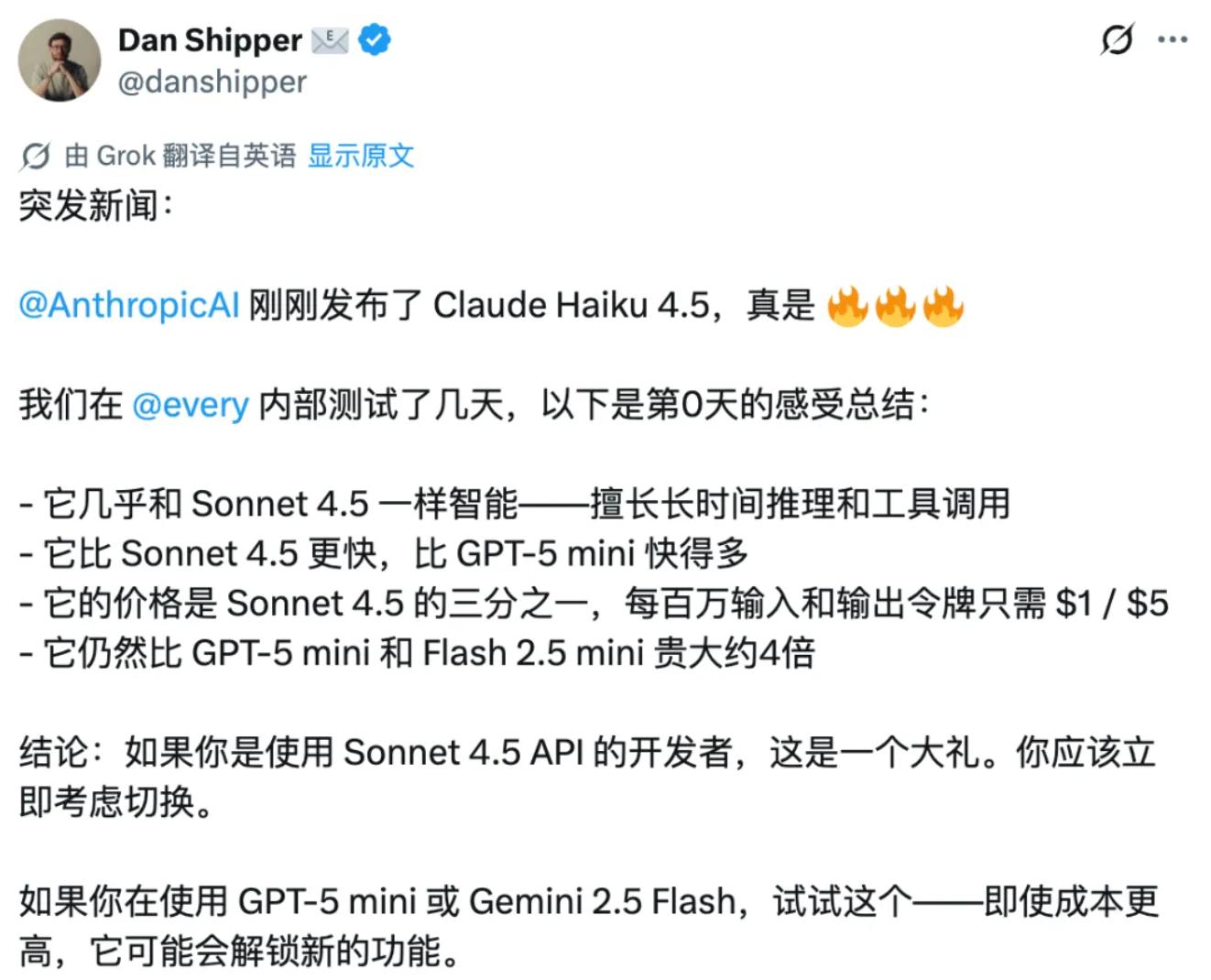

Dan Shipper’s candid assessment is:

If you are a developer or entrepreneur building complex agent applications with Sonnet 4.5, you should consider switching to Haiku. You can cut costs substantially with an almost negligible loss in performance.

If you are currently using Gemini 2.5 Flash or GPT-5 mini, you should try Haiku. Although it is slightly more expensive, it performs better in scenarios requiring tool invocation and autonomy.

Claude Haiku 4.5 is now available in Claude Code and Anthropic’s apps. Developers can use it through the Claude API, Amazon Bedrock, and Google Cloud’s Vertex AI as a drop-in replacement for Haiku 3.5 and Sonnet 4, at Anthropic’s most economical price point.
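For readers who want to try the model through the Claude API, a minimal sketch with Anthropic’s Python SDK might look like the following. The model identifier `claude-haiku-4-5` and the helper names `build_request` and `ask_haiku` are assumptions made for this example, not something stated in the article; check the current model list in Anthropic’s documentation before relying on the identifier.

```python
import os

# Assumed model identifier; verify against Anthropic's model documentation.
MODEL_ID = "claude-haiku-4-5"


def build_request(prompt: str, max_tokens: int = 512) -> dict:
    """Build the keyword arguments for a single-turn Messages API call."""
    return {
        "model": MODEL_ID,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }


def ask_haiku(prompt: str) -> str:
    """Send one prompt and return the text of the first content block."""
    import anthropic  # requires `pip install anthropic`

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment
    response = client.messages.create(**build_request(prompt))
    return response.content[0].text


if __name__ == "__main__":
    # Only call the API when a key is configured.
    if os.environ.get("ANTHROPIC_API_KEY"):
        print(ask_haiku("Write a one-line docstring for a function that adds two numbers."))
```

Switching an existing integration from another Claude model should, under these assumptions, only require changing the `model` value.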

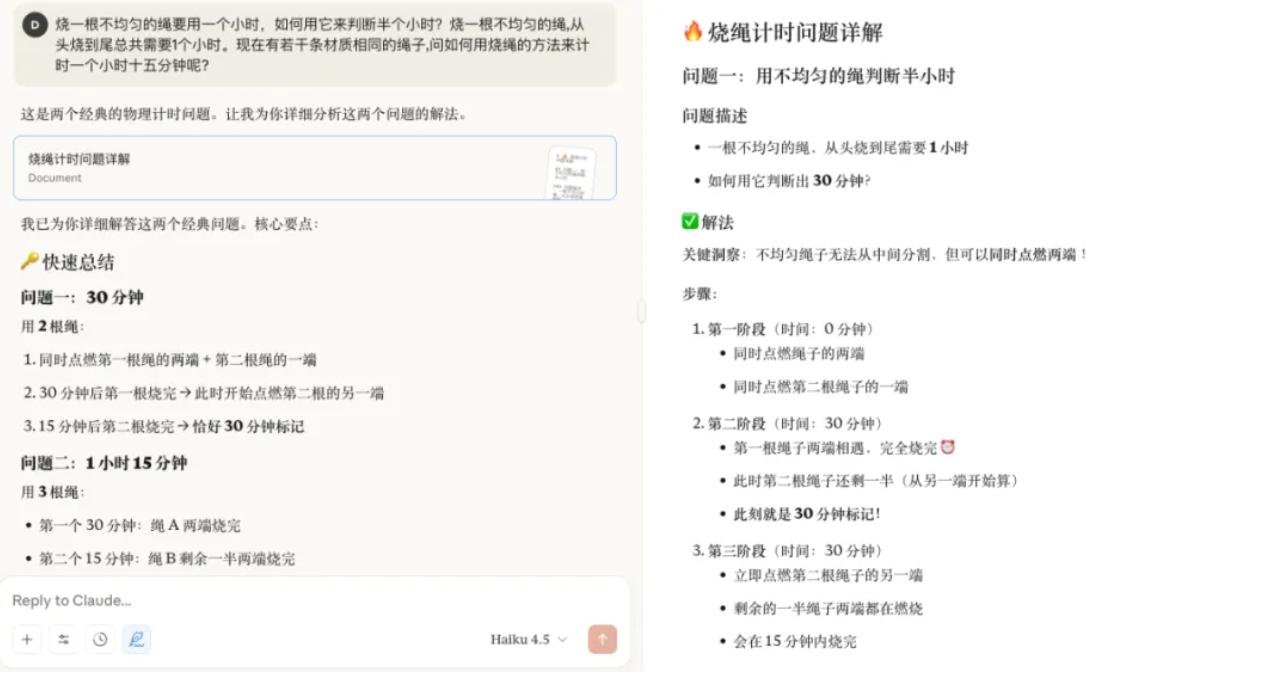

We borrowed @zb1992’s prompts and ran a clock demo with Claude Haiku 4.5. Code generation was noticeably faster, and the final result was quite satisfactory.

In the classic reasoning and calculation problem below, Claude Haiku 4.5’s speed advantage is even more evident, and speed is precisely the core competitive strength of lightweight models in practical applications.

Additionally, according to The Information, Anthropic, valued at $170 billion, has told investment banks in recent weeks that it plans to acquire more technical talent and to expand beyond programming assistants, while programming remains a significant source of its revenue.

Insiders indicate that, given Anthropic’s success with programming-related AI products, the company may next expand into other tools commonly used by developers, such as automated code vulnerability testing or software design assistance. There are also reports that Anthropic may pursue acquisitions aimed at building products for specific industries, such as financial services, healthcare, or cybersecurity, though it reportedly prefers smaller deals under $500 million.

It appears that while improving its models, Anthropic is also actively building out its ecosystem. In the competitive AI landscape, the ultimate beneficiaries are developers and users, who get stronger models, lower prices, and more choices.
