Claude Surges to Top of App Store

Claude has unexpectedly climbed to the top of the U.S. App Store, a surge tied directly to the conflict between Anthropic and the Pentagon.

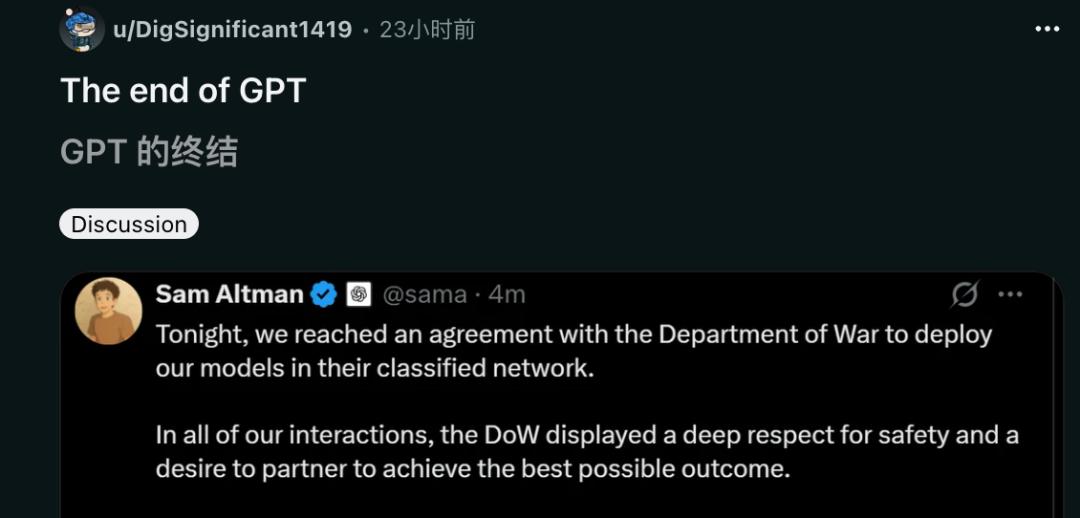

In a surprising turn of events, OpenAI employees signed a joint letter against the company, only for OpenAI to announce a defense contract with the Pentagon shortly after.

Some OpenAI employees who disagreed with the decision chose to resign. The episode triggered a massive backlash against OpenAI and a widespread movement to boycott ChatGPT.

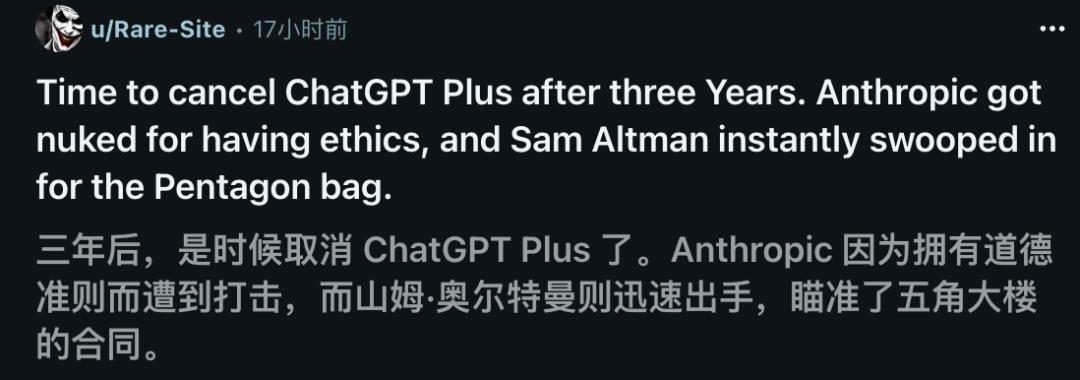

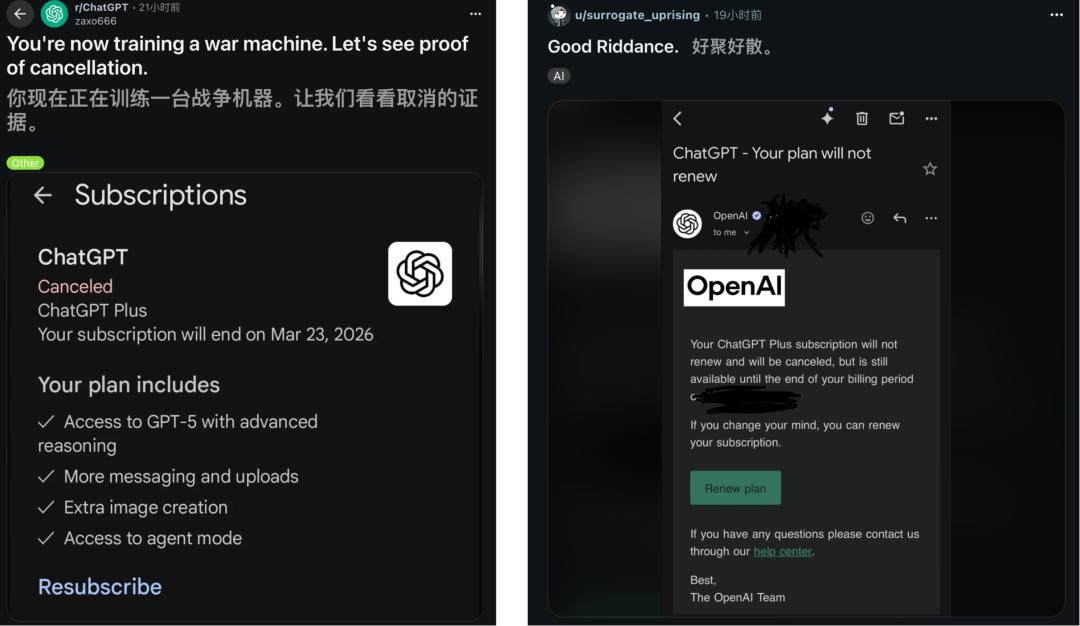

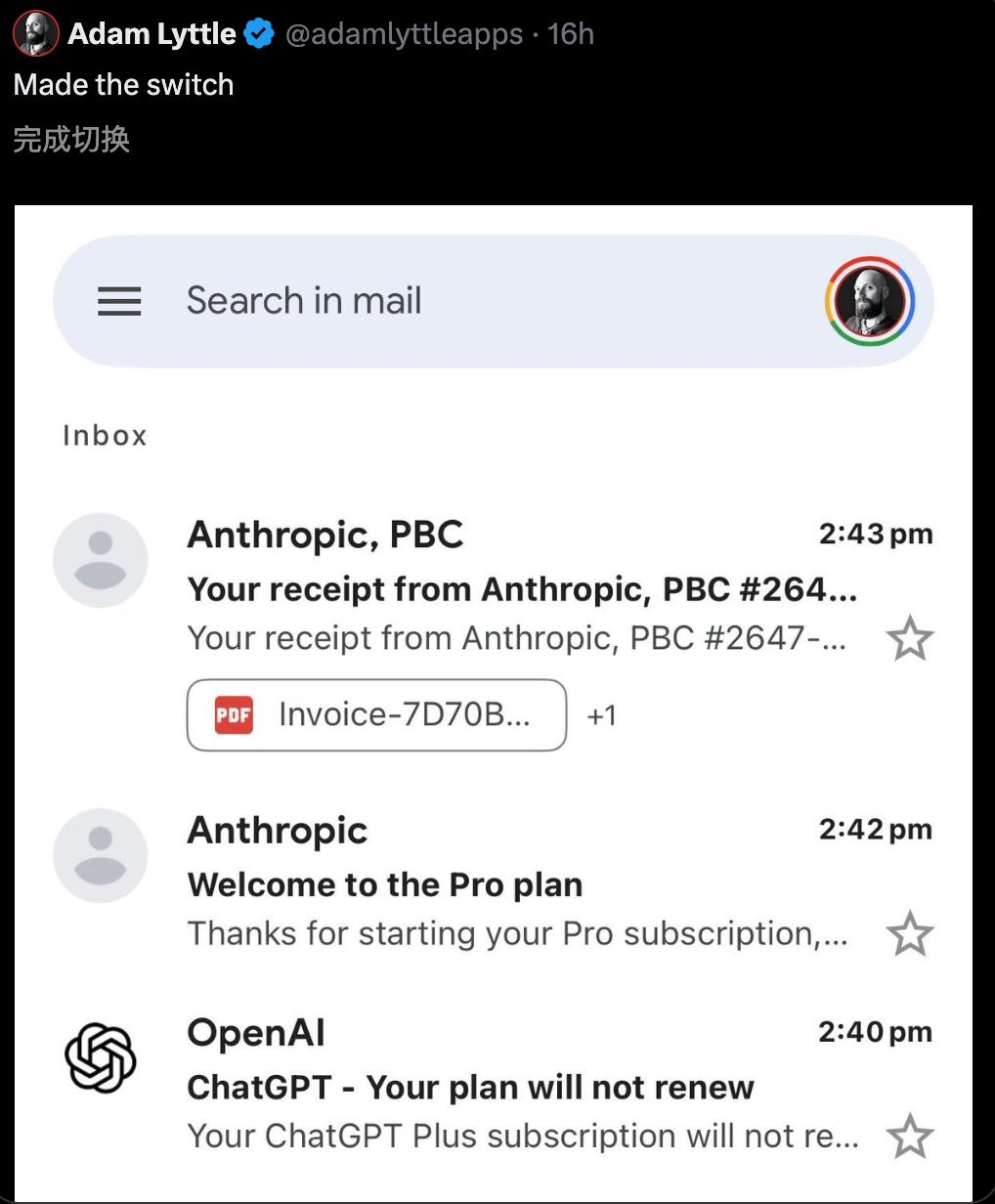

Currently, on platforms like Reddit and X, canceling ChatGPT subscriptions and switching to Claude has become the norm.

In this fierce competition, Anthropic lost a $200 million deal, while OpenAI faced significant public outrage.

Dario Amodei, CEO of Anthropic, made his first public appearance 24 hours after the ban. Visibly exhausted, he discussed the challenges of the negotiations and emphasized that the company would not yield on its principles.

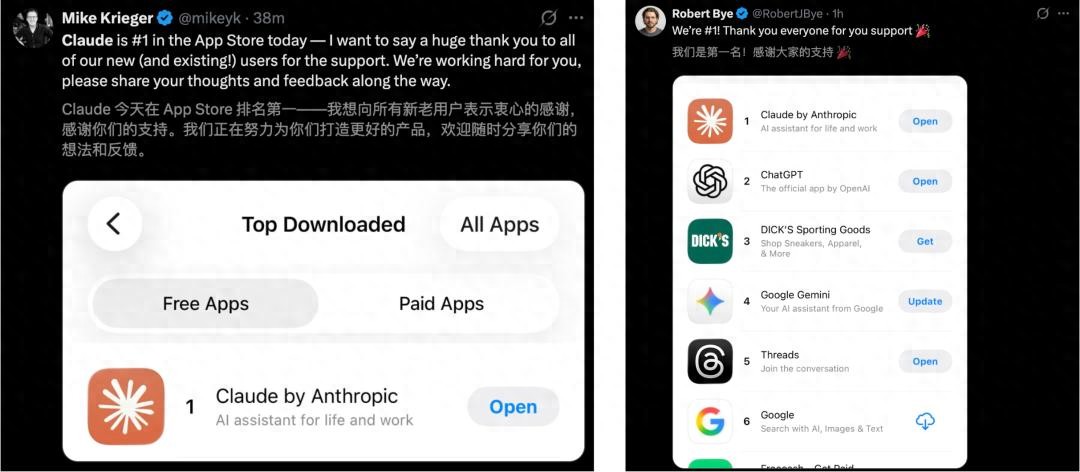

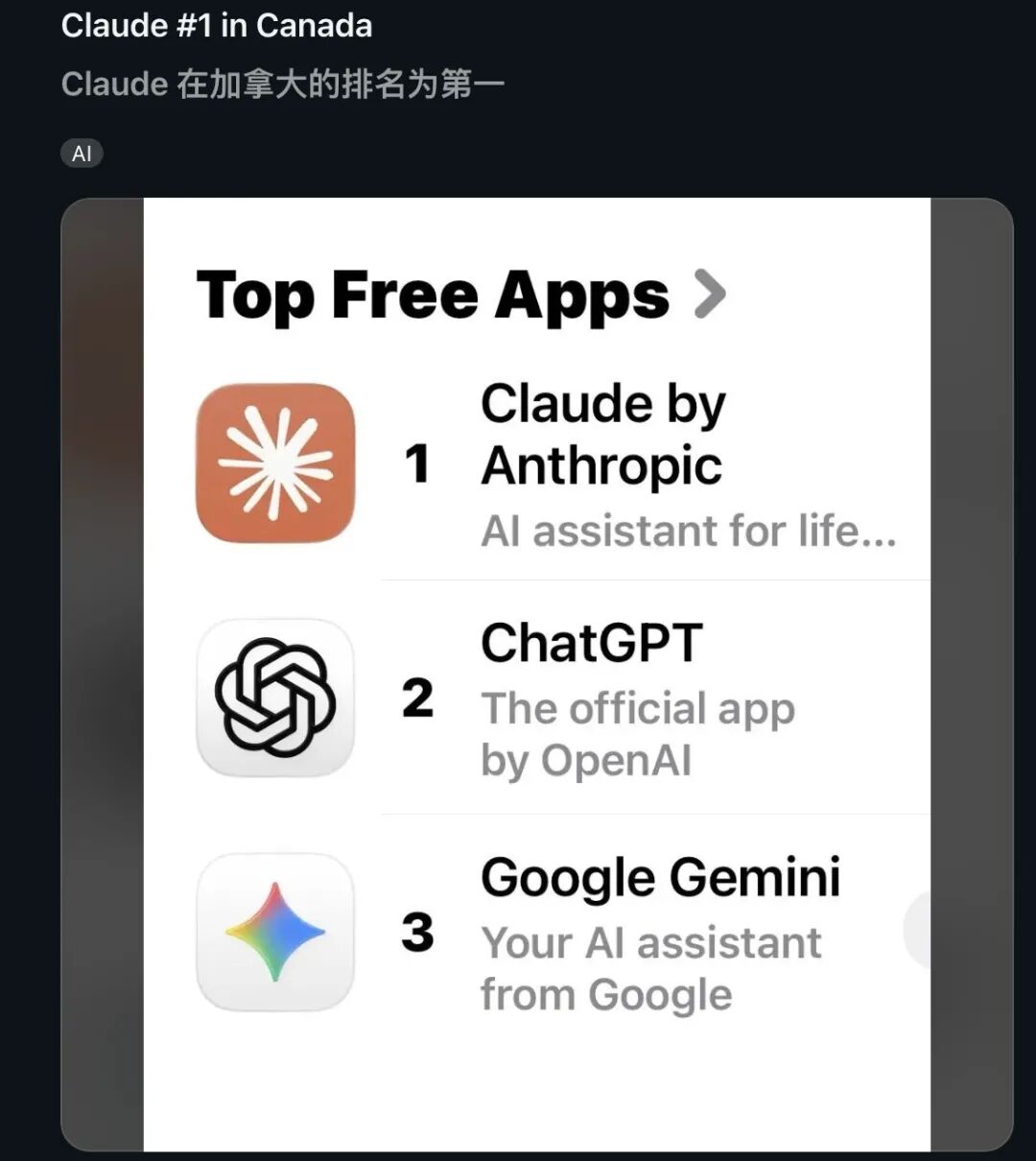

Claude’s Explosive Growth

Claude’s popularity has skyrocketed globally. It not only topped the U.S. App Store but also claimed the number one spot in the Canadian App Store.

According to Sensor Tower data, Claude’s rise is remarkable. At the end of January, it sat outside the top 100, and throughout February it hovered around 20th place. Then, within a few days, it climbed to 6th on Wednesday and 4th on Thursday, and took the top spot by Saturday.

The top three spots in the App Store are now dominated by AI giants: Claude, ChatGPT, and Gemini.

The catalyst for this surge was the breakdown of negotiations between Anthropic and the Pentagon. The Pentagon’s ultimatum expired yesterday, and Anthropic not only refused to compromise but also reiterated its two firm principles:

- No large-scale surveillance

- No development of autonomous weapons

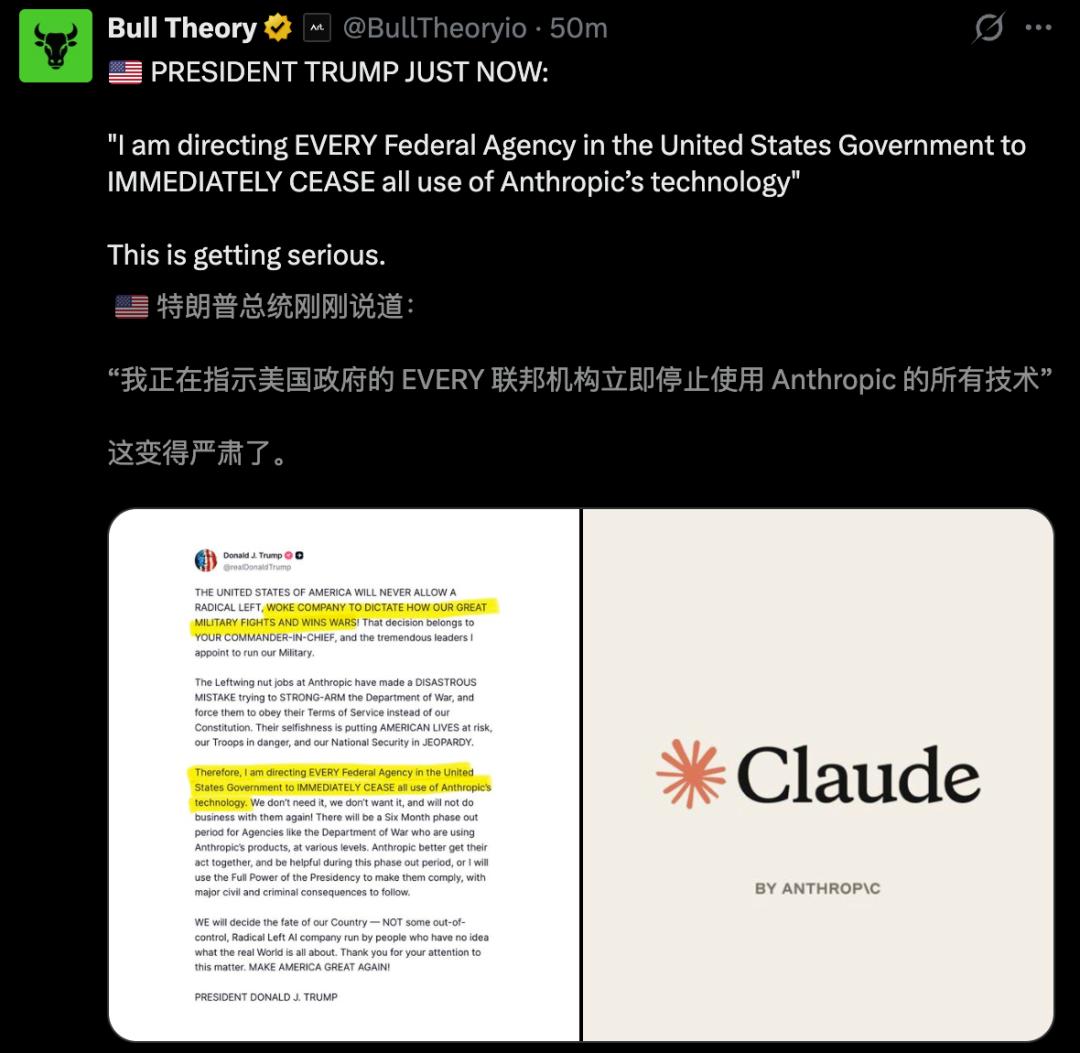

This stance angered the White House, and Trump ordered a complete ban on Claude, labeling it a “supply chain risk.”

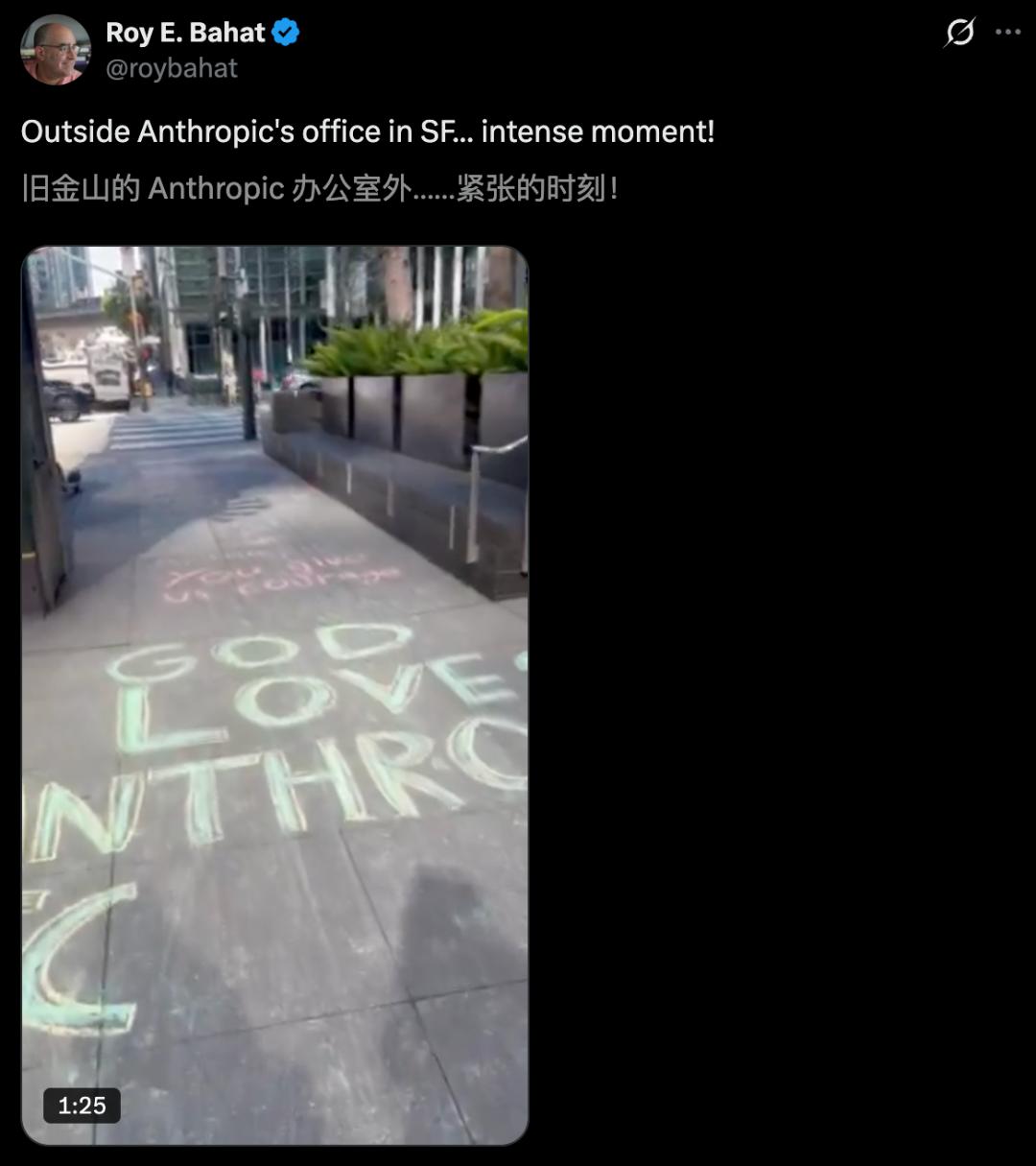

However, many members of the public praised Anthropic’s stance, and the company unexpectedly benefited from a surge in support. People even gathered outside its San Francisco office, chalking messages of love and gratitude, including “Thank you for not creating Skynet.”

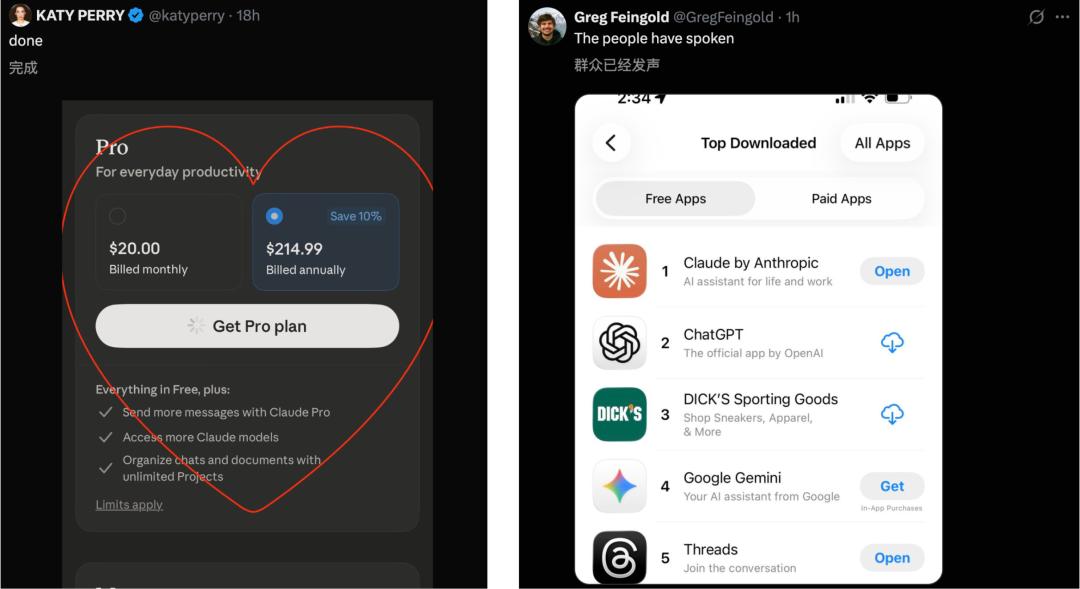

Many users showed their support for Claude directly, by downloading the app and subscribing.

Public Outrage Against OpenAI

In this critical showdown over AI ethics, Silicon Valley witnessed a dramatic “Tale of Two Cities.” While Anthropic faced a ban, its rival OpenAI quickly filled the void by announcing a deal with the Pentagon.

In a morning announcement, OpenAI laid out its commitments regarding the deal, claiming to uphold the same “red lines” as Anthropic:

- No large-scale domestic surveillance;

- No directing autonomous weapon systems;

- No high-risk automated decision-making.

Despite OpenAI’s attempts to present a peaceful facade on social media, government officials quickly exposed this illusion. The result was a tidal wave of protests against OpenAI, with numerous users sharing screenshots of their canceled ChatGPT Plus subscriptions, accusing the company of abandoning its mission to “benefit humanity.”

On Reddit, a flood of cancellation screenshots emerged, with angry users voting with their feet and viewing OpenAI as the “supervillain” of the tech world.

Some users canceled their ChatGPT subscriptions and signed up for Claude at the same time.

Because many users worried about losing their ChatGPT chat histories, others shared a workaround: export the data from ChatGPT (Settings > Data Control > Export), then use Memory Forge to convert the export into a format Claude can read.

A tutorial video for migration was also created on YouTube.

This boycott has dealt a significant blow to OpenAI.

Dario Amodei Speaks Out After the Ban

Following the ban, Dario Amodei, in an exclusive CBS interview, firmly defended Anthropic’s “red lines”:

No large-scale domestic surveillance and no weapon automation.

The Pentagon’s demand for unrestricted access led to the conflict, with Trump ordering federal agencies to ban Anthropic’s technology, categorizing it as a “supply chain risk.”

Amodei emphasized that dissent is a form of patriotism. He said Anthropic remains willing to cooperate but will not compromise on its principles. Despite his fatigue, he maintained a clear stance:

“We have two red lines that have existed since the founding of the company.”

“We still uphold these two red lines and will not back down on these issues.”

When asked what he would like to say to the president, he responded without hesitation:

“We are patriotic Americans. Everything we do is for this country.”

Anthropic’s willingness to let the military use Claude stems from its belief in America and its commitment to helping combat personnel. It was the first AI lab cleared for use on classified military systems, but it cannot accept the Pentagon’s demand for unrestricted use, which would extend to fully autonomous weapons and large-scale surveillance of American citizens.

To this end, Anthropic has drawn its “red lines,” with Amodei explaining the rationale behind them:

“We believe that crossing these boundaries would violate American values, and we want to stand up for those values.”

Trump abruptly directed federal agencies to phase out Anthropic’s services over six months.

Amodei described these measures as unprecedented interference in the private sector. Anthropic publicly opposed the government’s actions, and the White House in turn labeled its refusal “un-American.”

Amodei rejected this narrative: “Disagreeing with the government is the most American thing in the world.”

He noted that large-scale surveillance poses risks because AI can enable actions that were previously impossible, and the technology’s capabilities are “outpacing the law.”

In theory, AI could also drive fully autonomous weapon systems—machines choosing targets and executing strikes without human intervention. Amodei clarified that Anthropic is not fundamentally opposed to such weapons, especially if adversarial nations develop similar systems; however, “current reliability is insufficient,” and “we must have serious discussions about regulation and oversight.”

Because AI systems remain uncertain and prone to hallucination, Amodei fears that autonomous weapons could strike the wrong targets.

More importantly, unlike human-operated weapons, accountability for decisions made by fully autonomous systems is unclear.

He stated, “We do not want to sell products we deem unreliable, nor do we want to sell technology that could lead to the death of our personnel or innocent civilians.”

Amodei referred to the safeguards against surveillance and autonomous weapons as “narrow exceptions” and indicated that the company has no evidence showing the military has breached these red lines in practice.

The Pentagon, however, maintains that federal law already prohibits large-scale surveillance of American citizens and that fully autonomous weapons are already governed by internal military policies, so there is no need to write these AI usage restrictions into the contract.

This Thursday, the Pentagon’s Chief Technology Officer, Emil Michael, told the media, “To some extent, you have to trust the military to do the right thing.”

“To be prepared for the future,” Michael said, “we will never write in a contract that we cannot defend ourselves.”

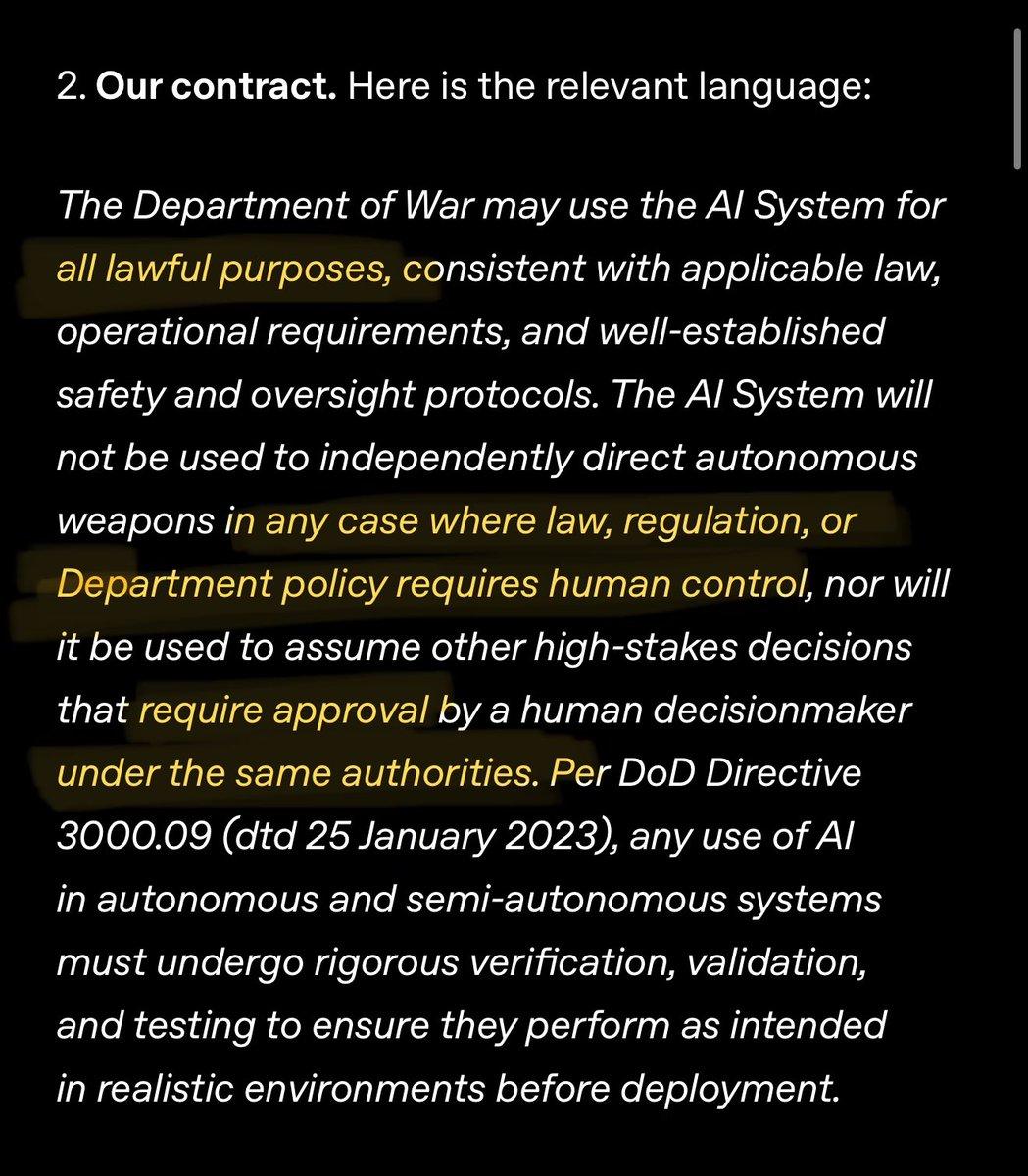

As a compromise, Michael mentioned that the military had proposed confirming in writing the restrictions on large-scale surveillance and autonomous weapons outlined in federal law and military policies.

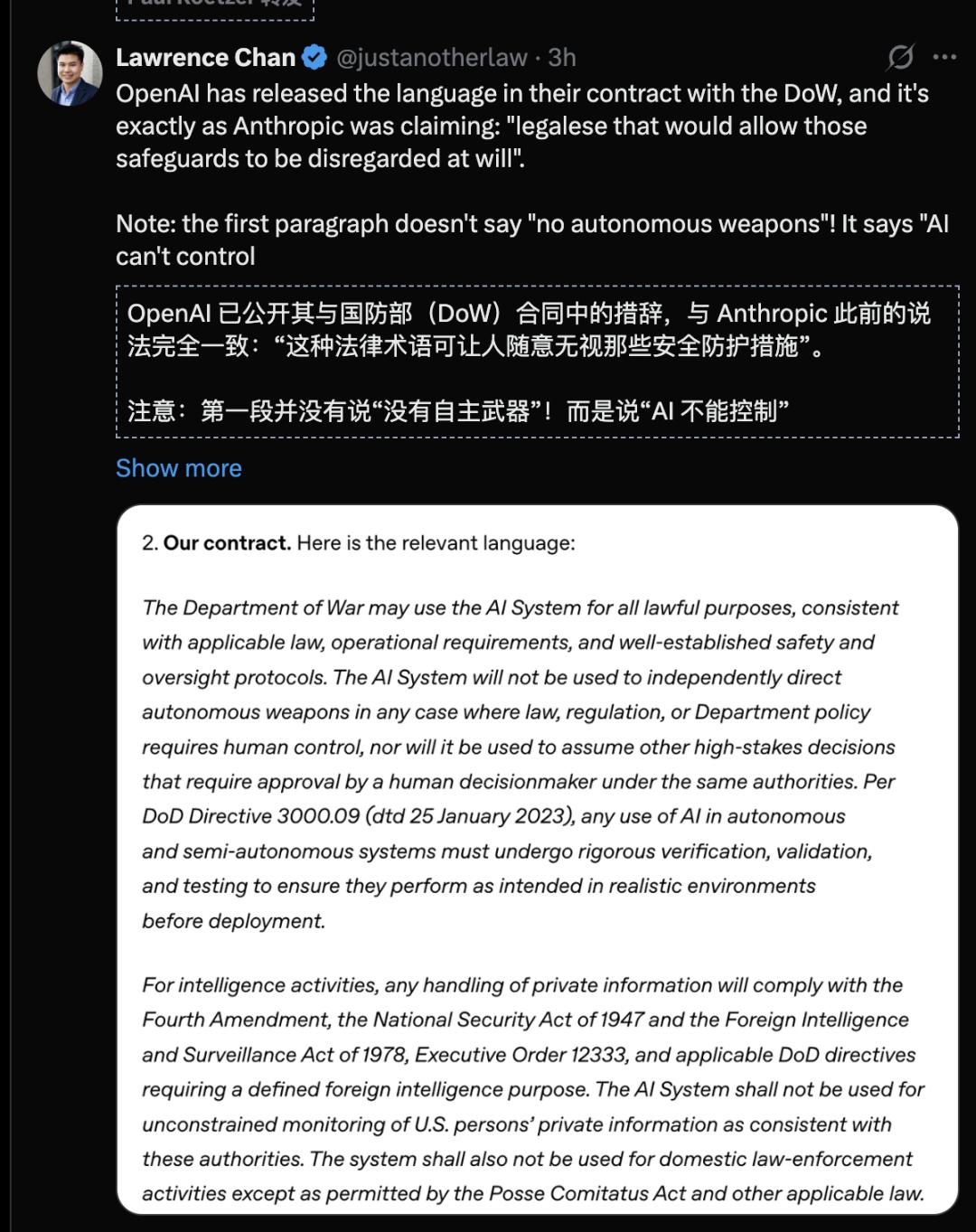

However, Anthropic responded that these commitments come with complex legal language, effectively leaving room to circumvent the safeguards.

Additionally, OpenAI has disclosed its contract with the Pentagon, confirming that Anthropic’s claims were not unfounded.

White House Discontent, Sympathy from Peers?

As the conflict between Anthropic and the Pentagon escalated, several senior officials accused the company and its CEO of attempting to impose their values on the government.

- U.S. Defense Secretary Hegseth labeled Anthropic as “self-righteous and arrogant”;

- Pentagon Chief Technology Officer Michael claimed Amodei has a “God complex”;

- Trump referred to Anthropic as a “radical left, woke company.”

Hegseth accused the company of pursuing a clear goal: gaining veto power over U.S. military operational decisions, which he called unacceptable.

When asked whether significant issues like AI safeguards should be decided by Anthropic rather than the government, Amodei replied:

“One of the meanings of a free market and free enterprise is that different people can offer different products under different principles.”

He added, “I believe we know best where our models are reliable and where they are not.”

In the long run, he suggested that Congress should intervene and regulate AI safety safeguards.

“But the pace of Congress is not the fastest in the world. And at this moment, we are at the forefront of this technology,” Amodei stated.

As both parties failed to reach an agreement by Friday, the military is expected to gradually cease using Anthropic’s AI technology over the next six months, opting for what Hegseth described as “better, more patriotic services.”

Hegseth also labeled Anthropic a “supply chain risk,” indicating that all companies doing business with the military are expected to cut ties with Anthropic.

Anthropic may find itself without resources, computing power, or funding, effectively choked by the White House and the Pentagon!

The “supply chain risk” designation is typically reserved for hostile nations or adversaries, making Anthropic the first American company to receive it.

Anthropic’s two red lines are not unreasonable; even OpenAI claims to adhere to similar “red lines.”

OpenAI also believes that categorizing Anthropic as a “supply chain risk” is unwarranted and has clearly expressed this position to the Pentagon.

OpenAI’s and Anthropic’s requests appear quite similar, so why was Anthropic rejected?

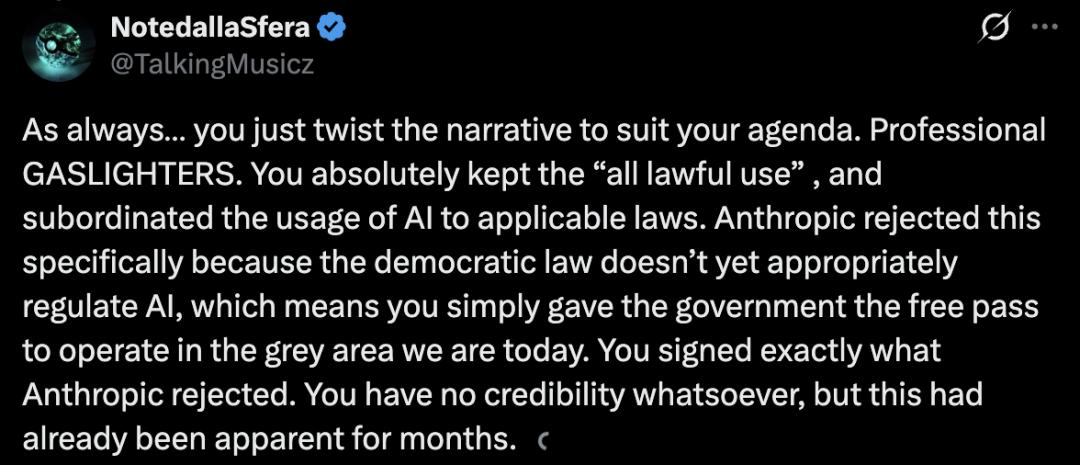

The answer is that OpenAI retained the “all lawful uses” clause and placed AI usage under applicable laws, while Anthropic explicitly rejected this.

Current U.S. law does not yet adequately regulate AI, which means OpenAI has effectively given the government a pass to operate freely in this gray area.

In other words, critics argue, OpenAI is merely pretending to be the “good guy” to gain sympathy:

“OpenAI signed precisely what Anthropic refused.”

“You have no credibility, and this was evident months ago.”

This storm has changed not only the rankings but also the public’s expectations of AI companies.

People are no longer solely concerned with stronger and faster models; they are beginning to question: when technology can monitor millions and control unmanned weapons, where do you stand?

Perhaps Claude’s explosive popularity will eventually normalize, and OpenAI’s controversies will fade.

But from this moment on, AI giants can no longer hide behind the guise of “technological neutrality.”

In the balance between power and security, every AI giant must choose its position.
