OpenAI recently launched its new large model, GPT-5.4-Cyber, which has drawn comparisons to Anthropic’s Claude Mythos. The similarities between these two models are striking, as both target similar user groups and application scenarios.

This trend of homogenization extends beyond the foundational models. A closer look at the recent products from both companies reveals that they are mirroring each other.

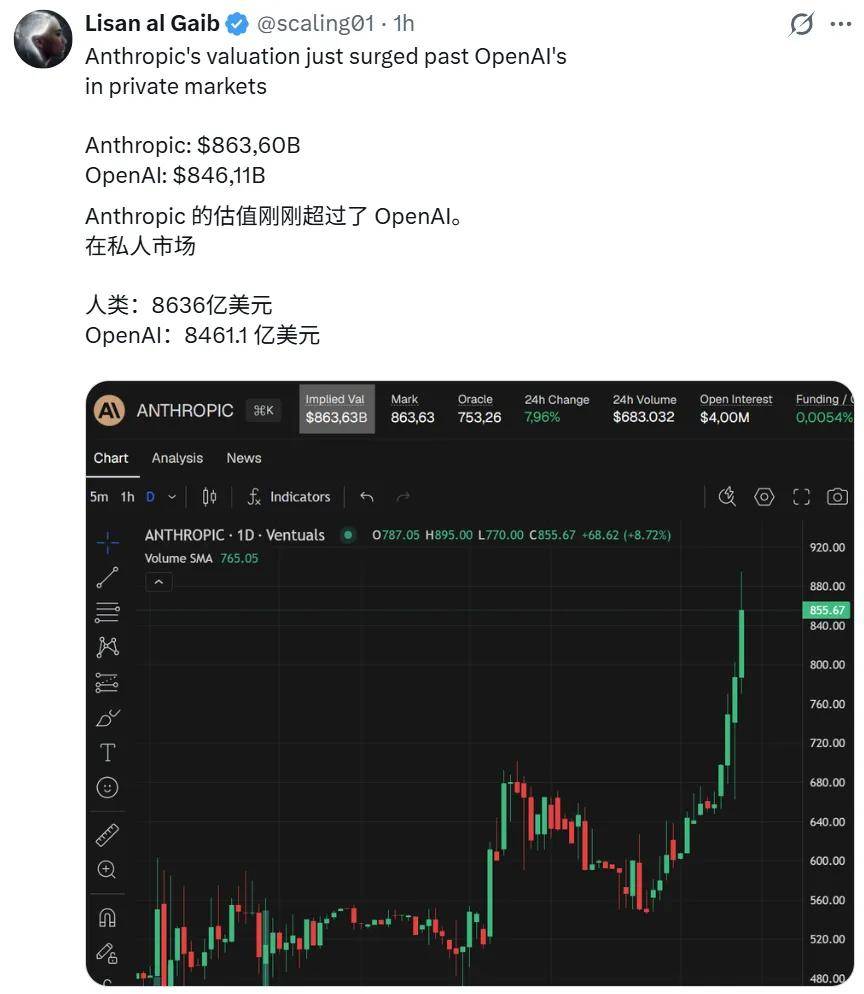

In the capital markets, the competition is fierce, and the two companies are closely matched in valuation. Anthropic’s recent gains in the enterprise market have even given it a slight edge over OpenAI. Investors increasingly see the two as having similar strengths.

The convergence of foundational models is likely to lead to similar applications.

Today, we will discuss two benchmark tools representing the pinnacle of AI-assisted programming: OpenAI’s Codex and Anthropic’s Claude Code. How have these tools evolved from distinct paths to become increasingly alike?

From Divergence to Convergence: The Evolution of Two Titans

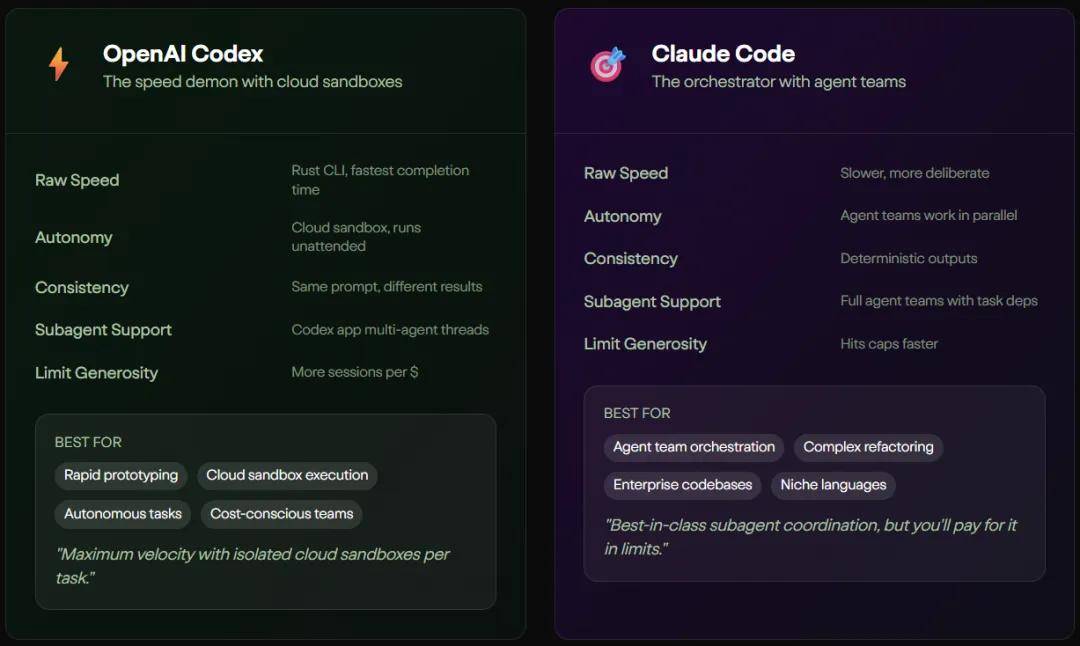

A few years ago, Codex and Claude Code emerged from different technological philosophies. Codex operates on the principle of speed, functioning like a seasoned developer ready to assist with code completion.

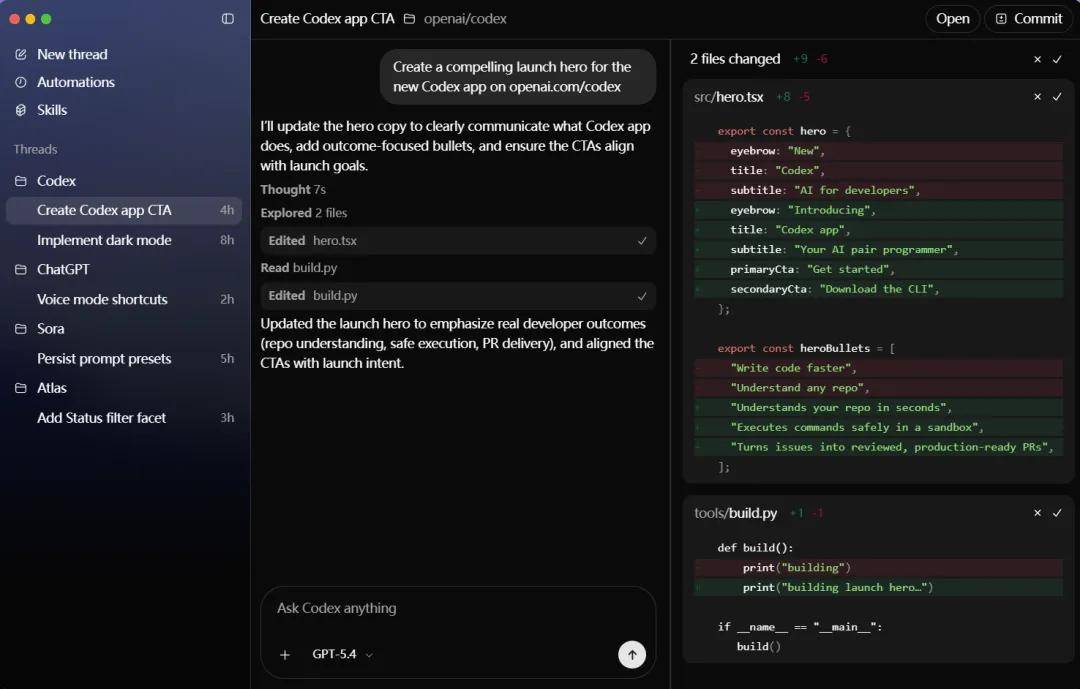

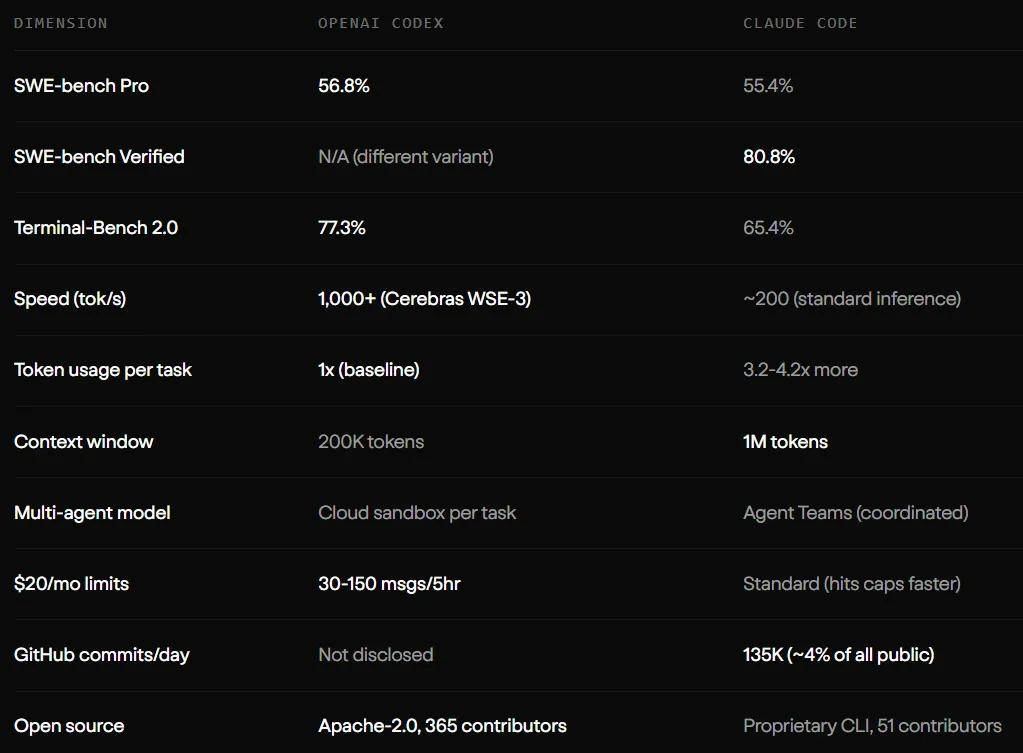

OpenAI envisioned Codex as a lightweight, highly interactive terminal agent that emphasizes rapid iteration. Running on Cerebras WSE-3 hardware, it reaches a throughput of 1,000 tokens per second. Codex offers three approval modes (suggest, auto-edit, and full-auto), which keep developers engaged in the workflow. This design suits developers who need to prototype quickly and work through high-frequency interactions.

In contrast, Claude Code was designed with a more reserved and architect-like approach.

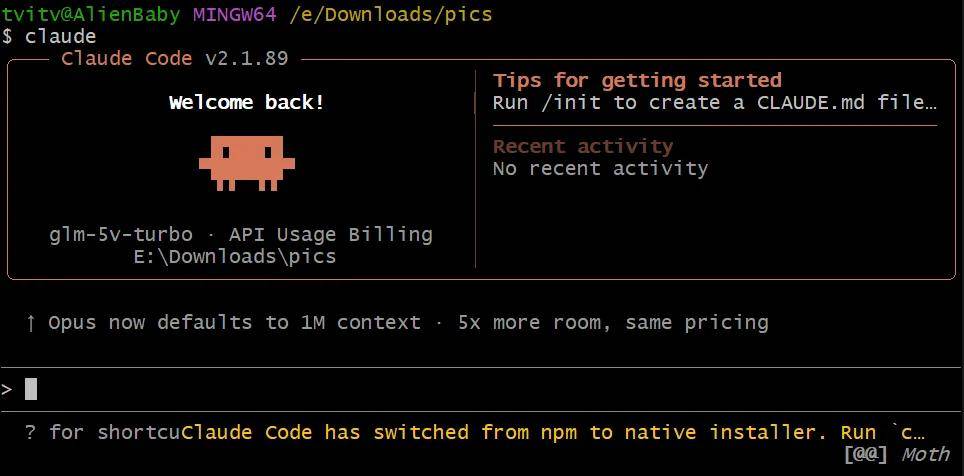

Anthropic built Claude Code to handle extremely complex tasks, with a context window of up to 1 million tokens and context compression that sustains very long conversations. Its guiding principle is to keep a global view and act deliberately. Before taking any action, it analyzes the entire codebase and coordinates modifications across multiple files, which makes it especially strong at enterprise-level refactoring tasks spanning thousands of lines of code.

However, as time has passed and application scenarios have expanded, these two originally distinct tools have begun to borrow from each other.

In complex projects, a single AI model faces the problem of context pollution. Asked to refactor an authentication module, for example, the model may forget design patterns from earlier files after it has analyzed many others. To address this, both companies have arrived at nearly identical solutions: giving each sub-task its own independent context window.

OpenAI quickly launched a macOS desktop application that isolates tasks by project into separate threads, each running independently in a cloud sandbox. Anthropic introduced an agent-team architecture that lets developers spawn multiple sub-agents, which share task lists and dependencies while working in parallel within their own context windows. Whether termed a “cloud sandbox” or an “agent team,” the core engineering concepts have aligned.
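The idea of one context window per sub-task, with only the task list and dependencies shared, can be sketched in a few lines. This is a minimal illustration, not either vendor's implementation: `SubAgent`, `Coordinator`, and the task names are hypothetical, and a plain list of notes stands in for a real context window.

```python
from dataclasses import dataclass, field


@dataclass
class SubAgent:
    """Hypothetical worker: holds only the context for its own sub-task."""
    task: str
    context: list[str] = field(default_factory=list)

    def observe(self, note: str) -> None:
        # Notes land in this agent's private context, never in a shared one.
        self.context.append(note)


@dataclass
class Coordinator:
    """Hypothetical coordinator: shares the task list and dependencies only."""
    agents: dict[str, SubAgent] = field(default_factory=dict)
    depends_on: dict[str, list[str]] = field(default_factory=dict)

    def spawn(self, task: str, after: tuple[str, ...] = ()) -> SubAgent:
        agent = SubAgent(task)
        self.agents[task] = agent
        self.depends_on[task] = list(after)
        return agent

    def ready(self, done: set[str]) -> list[str]:
        # A task can start once every task it depends on has finished.
        return [
            task
            for task, deps in self.depends_on.items()
            if task not in done and all(dep in done for dep in deps)
        ]


team = Coordinator()
auth = team.spawn("refactor auth module")
tests = team.spawn("update auth tests", after=("refactor auth module",))
auth.observe("auth uses the repository pattern")
```

What the first agent reads never enters the second agent's context, so a long analysis in one sub-task cannot crowd out details in another. The coordinator only tracks which tasks are ready to run.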

Benchmark results also show a delicate balance between the two. GPT-5.3-Codex scored 77.3% on Terminal-Bench 2.0, while Claude Code achieved 80.8% on SWE-bench Verified. Because these are different benchmarks, the scores are not directly comparable; each tool leads where it has traditionally been strong while working to close its gaps.

The OpenClaw Effect: The Invisible Hand Breaking Down Barriers

While internal strategies have driven the convergence of these companies, external pressures from the open-source ecosystem cannot be overlooked. OpenClaw has had a profound impact on the AI programming tools landscape.

As a workflow framework from the open-source community, OpenClaw has dismantled the ecosystem barriers erected by the tech giants. It standardizes how large models interact with local toolchains. Previously, capabilities such as connecting a model to local Git commits or safely running test scripts in a sandbox were proprietary to Codex and Claude Code.

OpenClaw abstracts these processes into a universal protocol, so developers are no longer tied to a specific platform. The open-source community’s enthusiasm has made standardization an irreversible trend, and both OpenAI and Anthropic have had to adapt to these open standards.

As OpenClaw’s open-source influence levels the foundational technical barriers, and as advanced features become standard, the only path forward for Codex and Claude Code is to refine the user experience at a granular level. This is why they increasingly resemble each other: within a standardized framework, optimal solutions tend to converge, much like convergent evolution in biology.

Codex is Catching Up to Claude Code

Despite the converging evolution of Claude Code and Codex, differences remain, with Codex gaining favor among developers in certain aspects.

Recently, a senior engineer with 14 years of experience shared a rigorous evaluation in the r/ClaudeCode community. He dedicated 100 hours to using Claude Code and 20 hours to Codex on a complex project with 80,000 lines of code.

From his perspective, using Claude Code felt like guiding an engineer racing against a deadline; while it was fast, it often overlooked developer guidelines in CLAUDE.md and tended to pile on code within existing files, lacking a refactoring mindset.

In contrast, Codex felt more like a steady developer with 5 to 6 years of experience. Although its processing speed was 3 to 4 times slower, it would pause to think and refactor code, adhering strictly to instruction boundaries. This high level of autonomy allowed the engineer to delegate tasks confidently to Codex while focusing on other responsibilities.

Similar sentiments have emerged on social networks like X. Researcher Aran Komatsuzaki noted that while Claude Code excels at frontend tasks, Codex is stronger at backend planning and keeps its information current through frequent web searches.

The comments section is filled with real-world experiences highlighting the trade-offs between the two tools. Developers have pointed out that while Opus-based models may run quickly, they often accumulate significant “code cleanliness debt.” Codex may be slower, but it effectively tidies up as it progresses. Some users even suggested a survival rule: initiate a new session when the context window usage reaches 70% to avoid hidden bugs.
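The 70% rule of thumb is easy to express as a check. The sketch below is only an illustration of that heuristic: the function name is made up, and it assumes you can obtain the current token count and the window size from whatever tool you use.

```python
def should_start_new_session(
    tokens_used: int, window_size: int, threshold: float = 0.70
) -> bool:
    """Return True once context usage reaches the threshold share of the window."""
    if window_size <= 0:
        raise ValueError("window_size must be positive")
    return tokens_used / window_size >= threshold
```

For a 1-million-token window, `should_start_new_session(750_000, 1_000_000)` signals that it is time to start over, while `should_start_new_session(400_000, 1_000_000)` does not.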

These candid observations indicate that as the capabilities of these two tools converge, the final decision for developers often hinges on subtle differences in “debt management” and “maintenance mindset,” along with unique challenges faced by Chinese users.

Reflecting on the Homogenization Behind Ecosystem Warfare

Ultimately, the effectiveness of Codex and Claude Code also depends on the developers themselves. As noted in the evaluation by u/Canamerican726, both tools can yield poor results if the user lacks software engineering knowledge. Tools do not equate to skills.

This statement shatters the illusion perpetuated by AI programming tools. We once believed that with a powerful AI assistant, even a novice could create enterprise-level applications. However, Claude Code requires a highly focused and skilled “driver” to navigate a vast codebase effectively. While Codex is more independent, it also needs precise system context from developers to maximize its utility.

In an era of highly homogenized tool capabilities, where have these companies’ competitive advantages shifted?

The answer lies in their financial reports and pricing strategies. Claude Code often consumes 3 to 4 times as many tokens as Codex for similar tasks, resulting in higher usage costs. For enterprise teams, Claude Code can cost between $100 and $200 per developer per month, while Codex offers a more affordable subscription plan and has built a large user base through its extensive GitHub community.
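To see what the per-developer figure means for a team budget, a small calculation helps. This sketch only multiplies the $100 to $200 monthly range quoted above by headcount; the function name and the ten-person team are illustrative assumptions, and real bills depend on actual token usage.

```python
def monthly_team_cost(
    developers: int, low: int = 100, high: int = 200
) -> tuple[int, int]:
    """Return the (low, high) monthly cost in dollars for a team of this size."""
    if developers < 0:
        raise ValueError("developers must be non-negative")
    return developers * low, developers * high


# Hypothetical ten-person team at the quoted per-developer range.
ten_person_team = monthly_team_cost(10)
```

At the quoted range, a ten-person team would spend between $1,000 and $2,000 per month.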

Anthropic aims to embed Claude Code deeply into the workflows of tech giants that can afford it. For instance, Stripe rolled out Claude Code to 1,370 engineers and used it to complete a cross-language code migration that would typically require ten people several weeks. Ramp has also used it to cut incident response times by 80%. OpenAI, with its pervasive ecosystem, has made Codex the default choice for many everyday developers.

This is no longer just a technical competition; it has become a war of ecosystem binding, pricing strategies, and reshaping user habits.

Developers at a Crossroads

Reflecting on the technological evolution over the past year, the release of GPT-5.4-Cyber is merely a small footnote in this ongoing battle. Codex and Claude Code are moving toward a “single face,” marking the transition of AI programming tools from an early stage filled with uncertainties and curiosities to a mature and mundane industrial production phase.

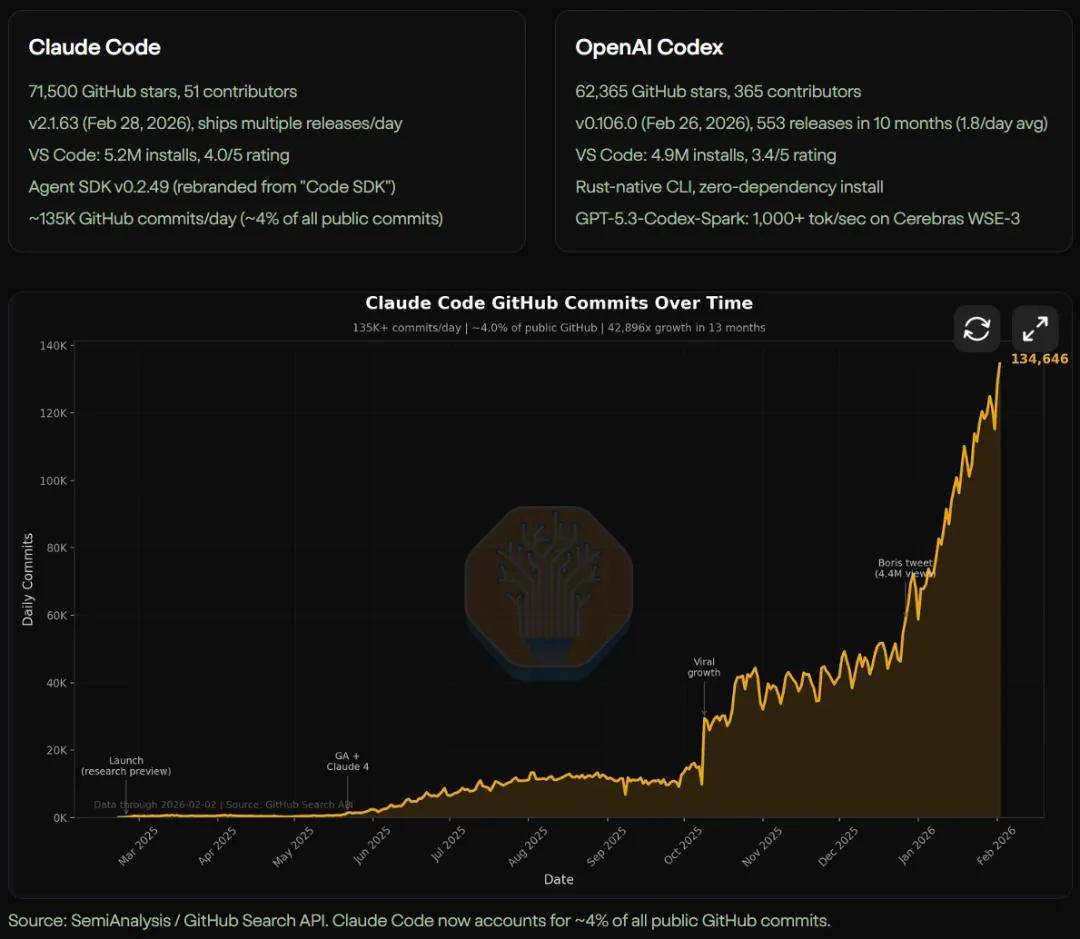

Currently, Claude Code generates 135,000 GitHub commits daily, accounting for 4% of all public commits. We can foresee that in the near future, most boilerplate code, basic test cases, and routine refactoring will be handled in the background by these increasingly similar AI agents.
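The two figures above imply a total daily volume, which gives a sense of scale. The snippet below just does that arithmetic with integers; the function name is illustrative, and the result is only as reliable as the 135,000 and 4% figures themselves.

```python
def implied_total(part: int, share_percent: int) -> int:
    """Return the total implied when `part` makes up `share_percent` percent of it."""
    if share_percent <= 0:
        raise ValueError("share_percent must be positive")
    return part * 100 // share_percent


# 135,000 per day at a 4% share implies about 3,375,000 per day overall.
total_per_day = implied_total(135_000, 4)
```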

Faced with two super tools that are converging in capability and mimicking each other in experience, what remains of our core value as human developers? Perhaps the tool’s golden age is nearing its end. When everyone wields the same sharp weapon, the true determinants of success will no longer be who has better code completion speed, but who can better define problems, who possesses a broader system architecture vision, and who can find their unique irreplaceability in a code world filled with AI.

So, which one will you choose?
